At the beginning of October, my friend Nic and I released a game called A Night at the Roculus. It was a really stupid weekend project, apparently to the point that it was too stupid for most people to ignore. It got a lot of love from Reddit and the media, and we got to demo with VRLA at IndieCade! And like Floculus Bird and SMS Racing before it, I had my third viral game in 14 months. I’m not going to try and explain my luck, but this time I at least had the foresight to record some player data just in case we got a lot of downloads.

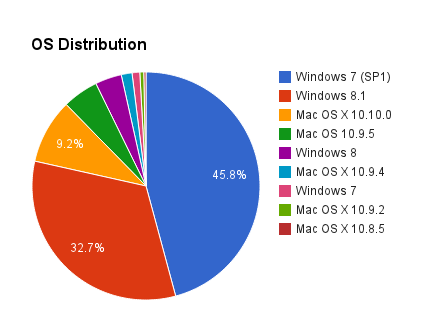

We used Unity’s free GameAnalytics package in Roculus. We didn’t track much – I mostly wanted to see if it was a game that people came back to often (which could indicate it’s a game people enjoy showing their friends). While I’m happy to report that a near-majority of players boot up Roculus on multiple days after their first download, I was surprised to see that the average rig was only running it at about 58 FPS. The game runs at a solid 75 FPS on my home computer with a GeForce 560, so I decided to do a bit of research on what our players were running. After looking through it and breaking it apart, I realized the data could offer a snapshot of the current Oculus community’s hardware for other devs to consider:

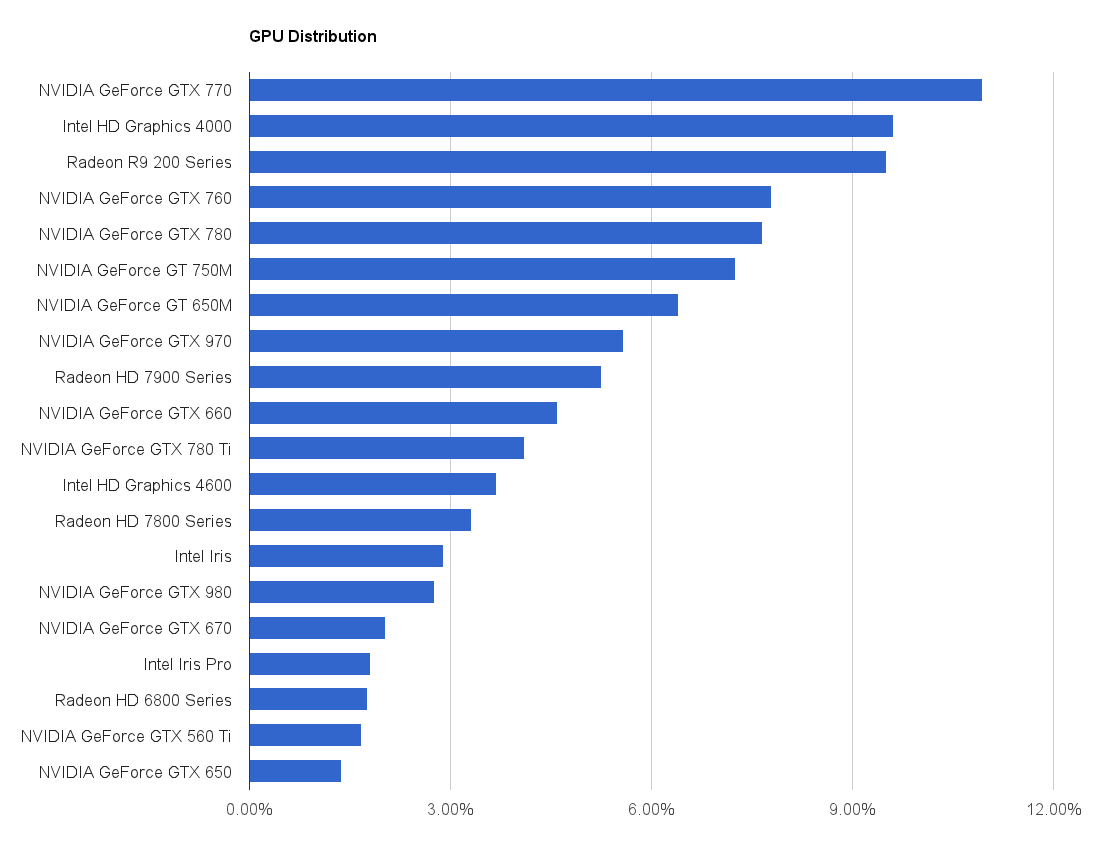

The 20 graphics setups listed above made up over 95% of the unique players we recorded, or around 8,000 players (as of November 15th). You might notice we didn’t get specific data for the Radeon cards – only the series numbers, which makes it less useful than the NVIDIA data, but we left it in there anyway to demonstrate the relative dominance of NVIDIA cards in our community.

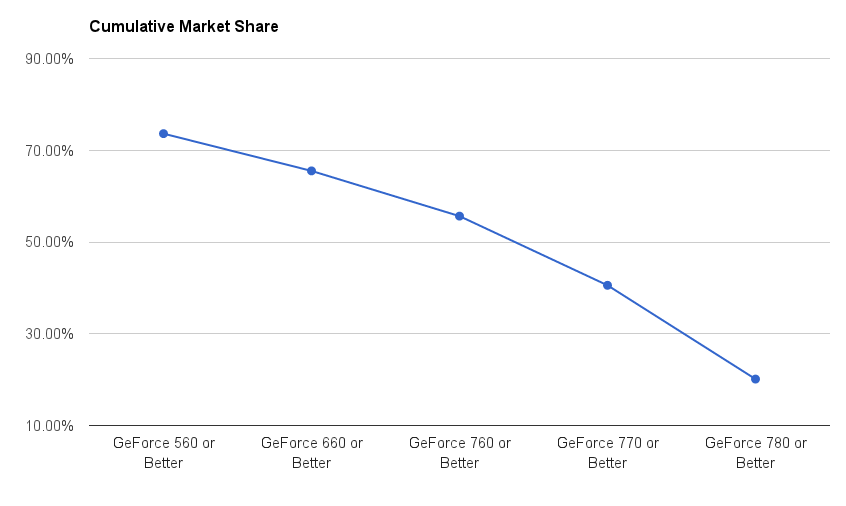

To make this data a bit more useful, I also organized it by “tiers” around common graphics cards based on their PassMark G3D Score:

For much of the past year, the Oculus dev community called out the GeForce 770 as a “recommended” spec, but only about 40% of our players had a 770 or better. In targeting the GeForce 560 as the “low-end” spec, I know the game was capable of hitting framerate for just over 70% of our players.

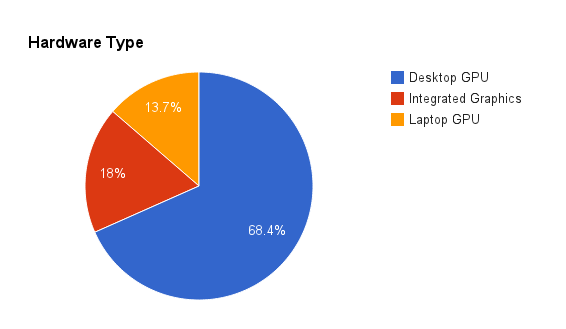
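The “770 or better” and “560 or better” figures above are cumulative shares: add up the players on every card whose G3D score meets the reference card’s score, then divide by the total. Here is a minimal Python sketch of that calculation, assuming each setup is exported as a (name, G3D score, player count) tuple. The function name and the sample rows are hypothetical placeholders, not the Roculus data.

```python
def share_at_or_above(setups, threshold_score):
    """Return the fraction of players whose GPU score meets the threshold.

    setups: iterable of (name, g3d_score, player_count) tuples.
    """
    setups = list(setups)
    total = sum(count for _, _, count in setups)
    if total == 0:
        raise ValueError("no players recorded")
    qualifying = sum(
        count for _, score, count in setups if score >= threshold_score
    )
    return qualifying / total


# Placeholder rows only: these names, scores, and counts are invented
# for illustration and are NOT the numbers recorded for Roculus.
example_setups = [
    ("card_a", 6000, 200),
    ("card_b", 3000, 300),
    ("card_c", 500, 500),
]

# Share of players at or above card_b's score: (200 + 300) / 1000
print(share_at_or_above(example_setups, 3000))  # 0.5
```

To reproduce a tier chart, swap in the real per-card player counts and use each reference card’s G3D score as the threshold.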

That said, I was surprised that nearly a third of our players were using laptops or computers without dedicated graphics cards to run the game. If we can’t rely on developers to have hardware with the recommended specs, it’s probably not going to be a lot easier for the mass market with CV1. It’s a good argument for targeting GearVR specs and optimizing the hell out of the things we’re making now.

At the same time, I wasn’t too surprised by the stubbornness of Intel HD 4000 series owners. I have that GPU in my 2012 MacBook Pro and I absolutely love the computer, but I found it to be barely capable for DK1 development and I never even bothered to try my DK2 with it. I suppose many Mac owners tend to think that recommended specs don’t apply to them, and although I’m regularly impressed with the performance I get out of my MacBook, this is one of those times where it simply isn’t up to the task.

Roculus was only released for PC and Mac – we skipped Linux because, at the time, Linux was not supported by the Oculus Runtime required to use the DK2. About 20% of our players were on Macs, and most of them were on MacBook Pros or Mac Minis. I assumed they were responsible for bringing the average framerate down, but it wasn’t the whole story: the average framerate on Macs was 46 FPS, but the average FPS on Windows was still just 63 FPS. I feel really bad for the Mac users who tried running the game on their 5-year-old laptops:

| OS X Device | Average FPS |
| --- | --- |
| iMac14,2 | 76.65 (28.91%) |
| MacBookPro11,3 | 61.60 (3.59%) |
| MacBookPro9,1 | 59.67 (0.36%) |
| iMac14,3 | 59.32 (-0.24%) |
| MacPro6,1 | 59.20 (-0.45%) |
| iMac13,2 | 58.51 (-1.60%) |
| iMac13,1 | 57.61 (-3.11%) |
| MacBookPro10,1 | 54.32 (-8.65%) |
| iMac12,2 | 53.61 (-9.85%) |
| iMac15,1 | 49.08 (-17.46%) |
| MacBookPro11,2 | 48.24 (-18.88%) |
| iMac12,1 | 47.05 (-20.86%) |
| MacPro3,1 | 46.98 (-21.00%) |
| iMac14,1 | 45.69 (-23.16%) |
| MacBookPro8,2 | 45.63 (-23.26%) |
| MacPro4,1 | 44.77 (-24.70%) |
| MacBookPro8,3 | 43.91 (-26.16%) |
| iMac11,3 | 40.77 (-31.43%) |
| MacPro5,1 | 39.84 (-32.99%) |
| Macmini5,2 | 26.64 (-55.20%) |
| iMac11,2 | 26.11 (-56.09%) |
| iMac14,4 | 25.86 (-56.52%) |
| iMac10,1 | 25.29 (-57.47%) |
| iMac9,1 | 24.88 (-58.17%) |
| MacBookPro11,1 | 22.92 (-61.45%) |
| Macmini6,2 | 22.72 (-61.79%) |
| MacBookAir6,1 | 22.58 (-62.02%) |
| MacBookPro9,2 | 22.30 (-62.50%) |
| MacBookPro6,2 | 20.61 (-65.34%) |
| MacBookAir6,2 | 20.58 (-65.39%) |
| MacBookPro6,1 | 20.10 (-66.20%) |
| Macmini6,1 | 20.03 (-66.31%) |
| MacBookAir5,1 | 18.89 (-68.23%) |
| MacBookPro10,2 | 18.54 (-68.82%) |
| MacBookAir5,2 | 18.34 (-69.16%) |
| MacBookPro5,3 | 17.50 (-70.57%) |
| iMac7,1 | 15.00 (-74.77%) |
| MacBookPro7,1 | 14.19 (-76.13%) |
| MacBookPro5,5 | 10.15 (-82.93%) |
| MacBookAir4,2 | 9.78 (-83.55%) |
| MacBookPro5,2 | 9.54 (-83.95%) |
| MacBookPro8,1 | 9.22 (-84.49%) |
| Macmini3,1 | 8.00 (-86.55%) |
| Macmini5,1 | 8.00 (-86.55%) |
| MacBook6,1 | 3.75 (-93.69%) |
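Two quick sanity checks on these numbers, sketched in Python. The first blends the platform averages using the roughly 20% Mac share, assuming every non-Mac player was on Windows. The second assumes the percentage next to each FPS value is that device's deviation from some overall baseline, and works backwards to find it. That reading is my guess, since the export doesn't label the column, and `implied_baseline` is a hypothetical helper name.

```python
# Figures quoted in the post.
MAC_SHARE = 0.20      # "about 20% of our players were on Macs"
MAC_FPS = 46.0        # average framerate on Macs
WINDOWS_FPS = 63.0    # average framerate on Windows

# Assumption: all remaining players were on Windows, the only other release.
blended_fps = MAC_SHARE * MAC_FPS + (1 - MAC_SHARE) * WINDOWS_FPS
print(f"blended average: {blended_fps:.1f} FPS")


def implied_baseline(fps, pct_delta):
    """Baseline FPS implied by a value and its percentage deviation.

    Assumes fps = baseline * (1 + pct_delta / 100), which is only a guess
    at what the percentage column means.
    """
    return fps / (1 + pct_delta / 100)


# Three rows copied from the table: (device, average FPS, percentage).
sample_rows = [
    ("iMac14,2", 76.65, 28.91),
    ("MacBookPro11,3", 61.60, 3.59),
    ("MacBook6,1", 3.75, -93.69),
]

baselines = [implied_baseline(fps, pct) for _, fps, pct in sample_rows]
for (device, _, _), baseline in zip(sample_rows, baselines):
    print(f"{device}: implied baseline {baseline:.2f} FPS")
```

Both checks land a little under 60 FPS, in the same ballpark as the roughly 58 FPS overall average mentioned earlier. If the guess about the percentage column is right, every row should imply about the same baseline, and these three rows do.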

So why were Windows machines still under the target framerate by a significant margin? This is where the data falls apart into a series of questions without answers. I didn’t know how to track whether people were using Direct Mode or Extended Mode, so I can’t see the correlation to framerate there. It’s possible that some people running in Extended Mode got capped at 60 FPS instead of 75 FPS. It’s possible that the game simply didn’t run as well on other people’s rigs as I thought, or that people were using different versions of the Oculus Runtime. There are way too many unknowns to determine exactly why the game didn’t hit framerate for everyone.

I know that it did hit framerate for nearly 3/4 of our players, but hopefully we can get that number up before CV1 releases!

This is great data, thank you very much for posting it.

I have to say I am one of those who downloaded your game, and although I thought it was fun for a little while and a good demo of what head tracking can do, it got boring pretty quickly.

Great stuff to make in a weekend, though, and a good addition with the GameAnalytics script. Thanks, you have inspired me to try harder at making a good immersive Unity game of my own.

This is super interesting info. I’m a dev making a Rift demo for both Mac and PC, and this kind of data gives me some reassurance that I’ll get OK performance from a good spectrum of the target audience. And perhaps a way to check this sort of thing out myself. I’m also in Unity.

Thanks for sharing!

-j